Building a Great Experience in Amplitude Experiment

Here are the newest updates to A/B testing in Amplitude Experiment, informed by your valuable customer feedback.

We released Amplitude Experiment in June of 2021 with the mission to help our customers make better product decisions and measure the impact of those decisions. For years, companies leveraged Amplitude Analytics’ power to understand customer behavior better and identify areas in their product requiring attention. With Experiment, customers can take rapid action against those insights by planning, executing, and analyzing sophisticated experiments to make better decisions that drive impactful results.

We’ve partnered with customers to make A/B testing and experimentation even easier. You’ve used Experiment to differentiate yourself in highly competitive markets, drive higher customer engagement, and make data-driven decisions faster. You’ve let us know what you love about Experiment and where we need to focus our attention next. Thank you for helping us build something that’s changing how companies define their products.

We’d like to share the improvements we’ve made to Experiment over the past three months and a few things we’re planning next.

Making feedback even easier

We value your feedback so much that we’ve added a new widget to Experiment that makes it easier to share. Click the Amplitude logo in the bottom right corner of your screen to bring up the widget and give feedback directly inside the app. The new digital customer success center also lets you search our Help Center, visit documentation, and open support tickets.

Delivering impactful product changes

New Experiment Lifecycle

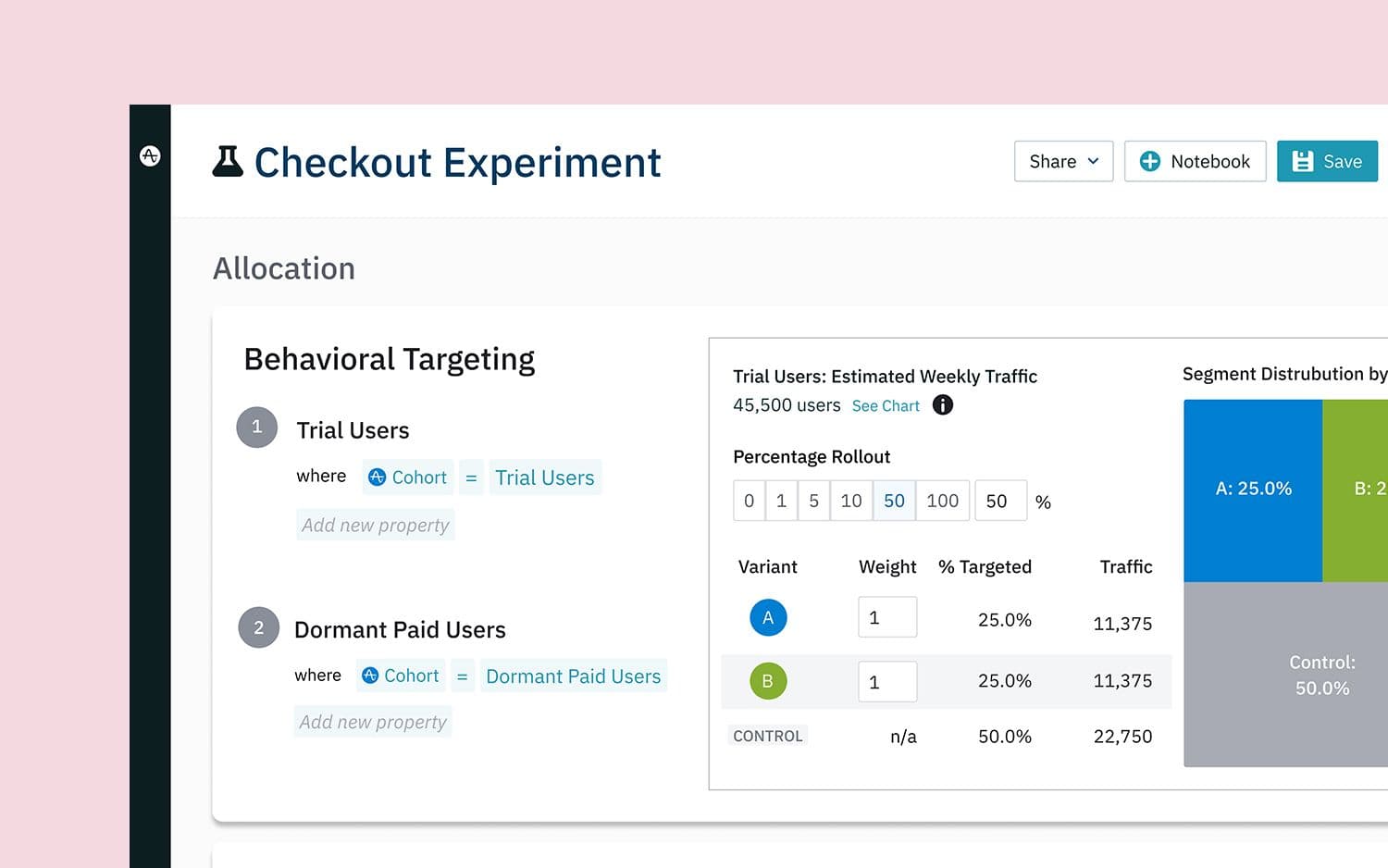

Experiments in Amplitude have three primary phases: Plan, Run, and Analyze. We overhauled the presentation of those phases to simplify the experimentation process, providing a better flow and organization to your experiments.

Better goals and takeaways

As part of the Plan and Analyze phases, we improved the hypothesis creation and analysis summary.

You’ve told us that you use experimentation to understand whether a product change had a measurable impact on the outcome that matters most to you. To make that goal explicit, the planning phase now pairs your success metric with a Minimum Detectable Effect (MDE): you choose the metric you want to impact and the smallest change in that metric you want the experiment to be able to detect (e.g., increase subscription purchases by 5%).
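To make the link between an MDE and experiment size concrete, here is a minimal sketch of the textbook sample-size approximation for a two-sided two-proportion z-test. It is not Amplitude Experiment's own calculation, which this post does not describe; the function name, the parameters, and the defaults (5% significance, 80% power) are assumptions chosen for illustration.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, relative_mde, alpha=0.05, power=0.8):
    """Approximate users needed per variant to detect a relative lift.

    Hypothetical helper: uses the standard two-proportion z-test
    approximation, not Amplitude's internal method.
    """
    if not 0 < baseline_rate < 1:
        raise ValueError("baseline_rate must be between 0 and 1")
    if relative_mde == 0:
        raise ValueError("relative_mde must be non-zero")

    # The rate we would see if the treatment hits the MDE exactly.
    target_rate = baseline_rate * (1 + relative_mde)
    if not 0 < target_rate < 1:
        raise ValueError("baseline_rate * (1 + relative_mde) must be between 0 and 1")

    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_beta = NormalDist().inv_cdf(power)           # desired statistical power

    variance = baseline_rate * (1 - baseline_rate) + target_rate * (1 - target_rate)
    effect = target_rate - baseline_rate
    return math.ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)


# Example: a 10% baseline purchase rate and a 5% relative MDE.
users_needed = sample_size_per_variant(baseline_rate=0.10, relative_mde=0.05)
print(f"Users needed per variant: {users_needed}")
```

The point is that a smaller MDE requires many more users: halving the detectable effect roughly quadruples the sample size, so a realistic MDE matters when planning.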

Once the experiment concludes, we present new “Takeaway” cards that recommend what you should do next based on whether you achieved your MDE and whether the experiment had enough data to show causality.

Easier experimentation implementation

We’ve heard from you that engineering teams are already resource-strapped trying to deliver new features and enhancements, so anything new to the development process must accelerate engineering efforts in the long run. We believe any experimentation platform must be easy to implement and maintain, not impact product performance, and easily fit into the existing development stacks.

Integrated SDKs

Client-side SDKs now support seamless integration between Analytics and Experiment SDKs. Integrated SDKs simplify initialization, enable real-time user properties, and automatically track exposure events. Correct implementation of experimentation is essential to having confidence in the experiment results. A missing user ID or out-of-sync device ID can change a user’s experience or throw off experiment analysis, and these problems are often the hardest to diagnose. The integration update should alleviate three common challenges faced during onboarding and implementation: initialization complexity, user property targeting, and client-side exposure tracking.

Improved exposure events

Proper exposure tracking helps drive accurate results in any experiment program by correctly communicating which users saw the experiment and should be included in the analysis. We’ve switched how we’re tracking users’ exposure to experiments. We now monitor for the actual exposure event in your product sent back to us, ensuring your user saw the experiment. This change improves analysis accuracy and reliability by removing the possibility of misattribution and race conditions caused by Assignment events.
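As a rough illustration of why the distinction matters, the sketch below builds analysis groups only from users who have an exposure event, ignoring users who were merely assigned a variant. The event dictionaries, field names, and function name are hypothetical and do not reflect Amplitude's actual event schema.

```python
from collections import defaultdict


def exposed_users_by_variant(events):
    """Group user IDs by variant, counting only users with an exposure event."""
    exposed = defaultdict(set)
    for event in events:
        # Assignment alone does not prove the user saw the experiment.
        if event["type"] == "exposure":
            exposed[event["variant"]].add(event["user_id"])
    return dict(exposed)


# Hypothetical event stream: u2 was assigned but never saw the treatment.
events = [
    {"user_id": "u1", "type": "assignment", "variant": "treatment"},
    {"user_id": "u1", "type": "exposure", "variant": "treatment"},
    {"user_id": "u2", "type": "assignment", "variant": "treatment"},
    {"user_id": "u3", "type": "assignment", "variant": "control"},
    {"user_id": "u3", "type": "exposure", "variant": "control"},
]

groups = exposed_users_by_variant(events)
print(groups)
```

In this example, `u2` is left out of the treatment group, so a user who never saw the change cannot dilute or distort the measured effect.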

A happier product experience

In addition to delivering significant improvements to the product, we’ve addressed a few minor things to make your experience just a tiny bit better.

- Fixed browser compatibility, especially for smaller screens

- Fixed errors when changing from flag to experiment mode

- Improved the management API

- Made date ranges consistent across all charts in the analysis section

- Added progress checks in the analyzing state

What’s next?

We’re excited to see our customers using these new enhancements and getting a great experience from Amplitude. This year we’re focused on some big things: more statistical analysis methods, better automation and simplification of experiments, more self-service control, and, as always, ease of use. A few of the many things you can expect from us include:

- Building in new tests to detect and notify if experiments are dealing with insufficient data

- Benefiting B2B teams by introducing new algorithms to run experiments against accounts and significantly reduce variance for small sample sizes

- Introducing additional language support for SDKs

- Enhancing the ability for flag management APIs to create, configure and read experiments and feature flags

- Building notifications and automation into the workflow to allow teams to plan, run, and understand more experiments in less time

- Working across teams at Amplitude to create new metric types to serve marketing and growth teams

And we might have something new to share at Amplify!

Please join us at Amplify this year to get hands-on with Experiment, talk to our product team, and ask us any questions about experimentation!

RJ Gazarek

Former Group Product Marketing Manager, Amplitude

Formerly Group Product Marketing Manager at Amplitude, RJ has been in product marketing for 10 years working at tech companies of various sizes, largely in either Cybersecurity or the Dev/DevOps spaces. He has a strong focus on B2B, Midmarket & Enterprise, and PLG motions.
