How to Use Amplitude Experiment for A/B Testing

Follow these three simple steps to run laser-focused A/B tests and improve the digital customer journey with Amplitude Experiment.

Originally published on December 13, 2021

Analytics and A/B testing are considered two of the most effective methods for conversion rate optimization (CRO).

It’s easy to understand why. Both provide insight into what’s working with your marketing and sales funnels and what’s not. It’s this information that enables you to implement the most impactful changes to the customer experience and increase conversions.

The only trouble is that, according to one study, one in four CRO professionals say that creating better processes is their number one challenge. They struggle to determine what exactly to test and in what order. And at the end of the day, meaningless tests produce meaningless results, which lead to meaningless optimizations.

There is a solution: Stop wasting your time with arbitrary tests and use Amplitude Experiment to run laser-focused A/B tests. With Experiment, you can implement valuable tests at the right points of your funnel based on user data and behaviors.

Run an A/B Test with Amplitude Experiment (in 3 Simple Steps)

Product development and marketing teams use Amplitude Experiment to analyze and improve the end-to-end user experience. When you have analytics, customer behavior, and A/B testing all in one place, you get to improve the entire customer journey from conversion to customer loyalty.

Running an experiment with Amplitude comprises three simple steps:

Step 1: Design

With Amplitude, you don’t just start the experiment and hope for the best. You use the carefully curated workflow to outline the problem, provide supporting evidence, and formulate a hypothesis for your A/B test. In other words, you design a more meaningful test.

Amplitude Experiment walks you through A/B test design step by step.

A/B testing with Amplitude is all about the customer data. Because it’s a fully integrated system, you’ll use evidence from Amplitude Analytics to support your hypothesis and measure the results of the test.

All of the above reduces the need for the multiple disparate tools you may have used to design A/B tests in the past, such as analytics, usability testing, and customer feedback tools.

Step 2: Rollout

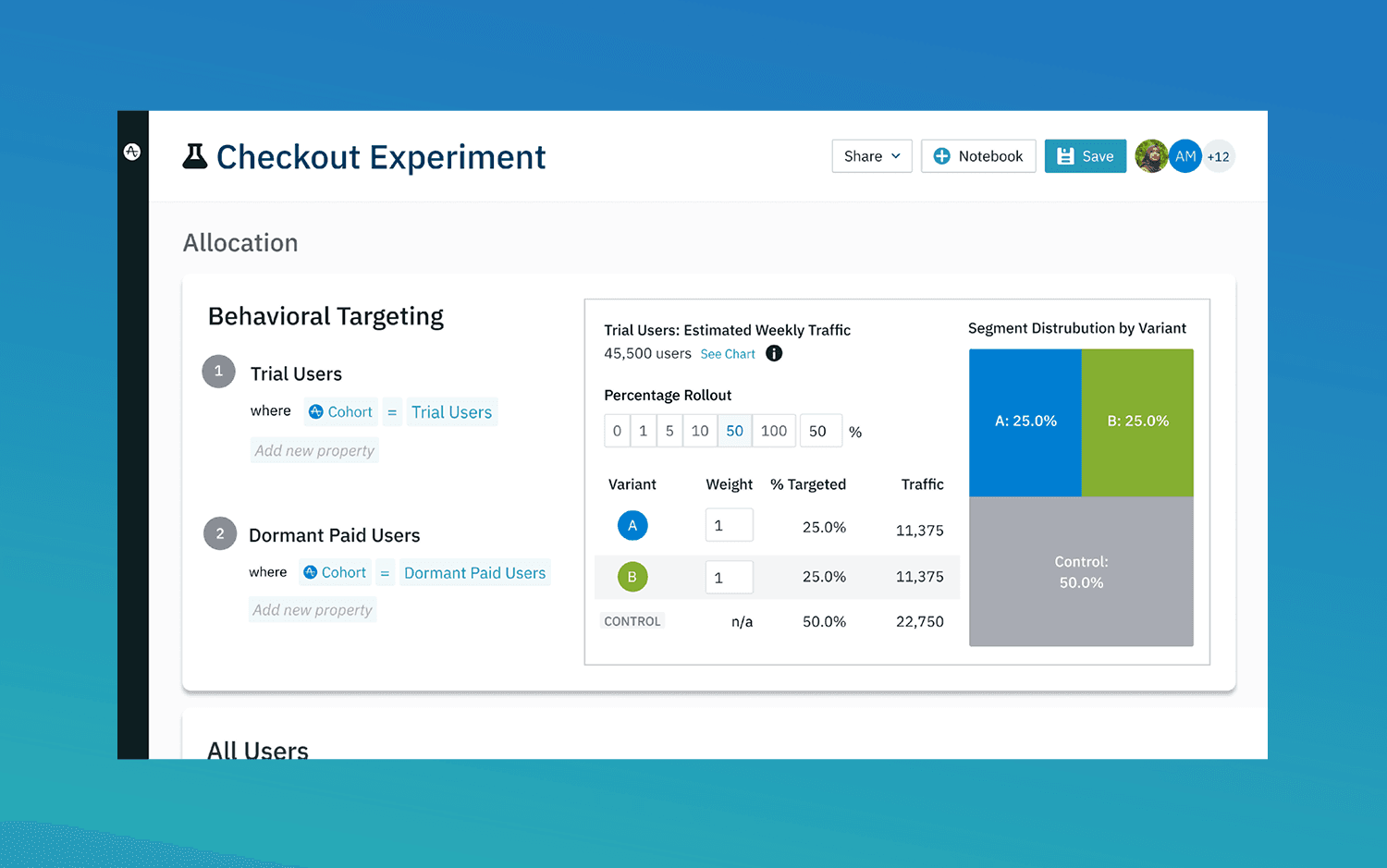

Choose a control and one or more variant versions of the page, screen, or design elements you wish to test. There’s minimal development work involved, as Amplitude takes care of the code that initializes your tests and captures the results.

Test variants for your app, website, or digital product with minimal development work.

To focus your test even further, you have the option to target particular segments. For example, the experiment might only trigger when a user completes a certain action.

Amplitude also resolves users’ identities across devices and platforms. This means you’re able to maintain a high level of consistency and accuracy throughout experiments.
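To make the rollout ideas above concrete, here is a minimal sketch of segment targeting plus deterministic variant assignment. It is an illustration only, not Amplitude’s implementation or API: the function names, the `completed_onboarding` action, and the variant labels are all hypothetical. The point it shows is that hashing a stable user ID (rather than a device ID) gives the same person the same variant on every device.

```python
import hashlib

# Hypothetical variant labels for illustration.
VARIANTS = ["control", "variant"]


def in_target_segment(user: dict) -> bool:
    """Hypothetical targeting rule: only users who completed a given action."""
    return "completed_onboarding" in user.get("actions", [])


def assign_variant(user_id: str, experiment_key: str, variants=VARIANTS) -> str:
    """Deterministically map a stable user ID to a variant via hashing."""
    digest = hashlib.sha256(f"{experiment_key}:{user_id}".encode()).hexdigest()
    return variants[int(digest, 16) % len(variants)]


def variant_for(user: dict, experiment_key: str):
    """Return a variant for targeted users, or None if the user is excluded."""
    if not in_target_segment(user):
        return None
    return assign_variant(user["user_id"], experiment_key)
```

Because the assignment depends only on the experiment key and the user ID, calling `variant_for` twice for the same user always returns the same answer, with no lookup table to keep in sync.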

Step 3: Learn

Get real-time results and use those results to make adjustments to your website or app quickly. This, of course, helps your team be more agile and over time leads to greater customer retention. For instance, you might fix a user experience issue and therefore prevent frustrated users from uninstalling your app.
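Before acting on results, it helps to check that a gap between control and variant is unlikely to be noise. The sketch below is a generic, textbook two-proportion z-test. It is not a description of how Amplitude computes its results, and the function name and parameters are made up for illustration.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) comparing conversion rates of A and B.

    conv_a / conv_b are conversion counts; n_a / n_b are users exposed.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both groups convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("standard error is zero; rates are all 0% or 100%")
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value
```

A small p-value suggests the difference is real, but it says nothing about why users behaved differently, which is where the funnel view comes in.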

What might be even more interesting, particularly for marketing and growth teams, is to use the product to address bigger questions related to CRO.

Amplitude experiments have greater depth than your average A/B test. The product allows you to test more than just one small aspect of the customer experience. It shows A/B performance at every step of the funnel, instead of giving you only one metric about how the funnel converts at its end. You’ll get a granular analysis that uncovers opportunities for improvement that you’re not able to see with most analytics tools.

Find and Remove Roadblocks

The issue with most A/B testing tools is that they merely show whether users have converted or not. So you may learn that a particular variant yields more conversions. However, there’s little indication as to why, and you fail to gain an understanding of customers and why they behave the way they do. There are no deeper insights you can use to increase conversions across the board.

Amplitude, however, allows you to take a deep dive into user behavior. Add your funnel(s) to Amplitude and you’ll see how your control and variant groups move through your funnel. More importantly, you’ll see exactly at which step users drop off during an experiment.

The A/B test view within the Funnel Analysis chart displays user movements through your funnel.

In blue, we have the control version of a page and in green, the variant. You can see precisely how many users made it through each step of the funnel for both the control and the variant. For instance, the difference is relatively small in funnel step one, so our optimization work will likely yield much bigger improvements if we focus on reworking steps two, three, or four.

But what exactly can you learn from an experiment like this?

Let’s say, for instance, that you have a landing page that asks users to sign up to receive your lead magnet. The funnel here may look something like this:

- View landing page

- Click CTA button

- Complete quiz

- Complete sign-up form

Let’s say the control and variant perform equally at step two, but the variant performs better at step three. Now you know precisely where the problem with your funnel lies: the quiz step in the control version is where users are getting stuck.
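The comparison described above can be sketched in a few lines. This is a rough illustration with made-up user counts, not output from Amplitude; the function names are hypothetical. It computes the step-to-step conversion rate for each group and reports the step where control and variant differ most.

```python
FUNNEL_STEPS = [
    "View landing page",
    "Click CTA button",
    "Complete quiz",
    "Complete sign-up form",
]

# Made-up counts of users reaching each step, for illustration only.
CONTROL = [1000, 400, 120, 90]
VARIANT = [1000, 400, 240, 180]


def step_conversion(counts):
    """Return the share of users who advance from each step to the next."""
    return [curr / prev if prev else 0.0 for prev, curr in zip(counts, counts[1:])]


def biggest_gap(control, variant):
    """Return (step name, control rate, variant rate) where the rates differ most."""
    c_rates = step_conversion(control)
    v_rates = step_conversion(variant)
    idx = max(range(len(c_rates)), key=lambda i: abs(v_rates[i] - c_rates[i]))
    # Rate idx describes the transition into step idx + 1.
    return FUNNEL_STEPS[idx + 1], c_rates[idx], v_rates[idx]
```

With these sample numbers, both groups click the CTA at the same rate (40%), but only 30% of control users complete the quiz versus 60% of variant users, so `biggest_gap(CONTROL, VARIANT)` points at "Complete quiz".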

So, A/B testing in this way allows you to find and fix roadblocks that prevent users from making it to the next stage of your funnel. And once you fix issues at one stage of your funnel, you can go on to run further experiments, to retain even more users in the next stages of the funnel and so on.

Just be sure to start with the experiments that are likely to yield the best results. At McGaw, we developed the VICE framework for A/B testing (velocity, impact, confidence, ease).

McGaw’s VICE Framework for A/B testing helps you score your hypotheses.

We use these four descriptors to rate the likelihood of a favorable outcome for each of our proposed hypotheses. This allows us to prioritize the most important A/B tests that’ll help increase conversions right away.
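As a rough sketch of how such scoring might work in practice, the snippet below rates each hypothesis on the four VICE dimensions and sorts by the result. The 1 to 10 scale, the unweighted average, and the example hypotheses are assumptions made for illustration; the actual VICE template may score differently.

```python
from dataclasses import dataclass


@dataclass
class Hypothesis:
    name: str
    velocity: int    # assumed scale: 1 (slow to run) to 10 (fast)
    impact: int      # assumed scale: 1 (minor) to 10 (major)
    confidence: int  # assumed scale: 1 (a guess) to 10 (well supported)
    ease: int        # assumed scale: 1 (hard to build) to 10 (trivial)

    def vice_score(self) -> float:
        """Unweighted mean of the four ratings (an assumed scoring rule)."""
        return (self.velocity + self.impact + self.confidence + self.ease) / 4


def prioritize(hypotheses):
    """Return hypotheses sorted from highest to lowest VICE score."""
    return sorted(hypotheses, key=lambda h: h.vice_score(), reverse=True)


# Hypothetical backlog for illustration.
BACKLOG = [
    Hypothesis("Shorten the quiz", velocity=6, impact=8, confidence=7, ease=5),
    Hypothesis("Change CTA color", velocity=9, impact=2, confidence=3, ease=10),
    Hypothesis("Rewrite sign-up form", velocity=4, impact=7, confidence=5, ease=4),
]
```

Calling `prioritize(BACKLOG)` puts the highest-scoring hypothesis first, which gives the team an explicit, repeatable order for running tests.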

Gather Enhanced Data

Other analytics products are further limited in that you don’t get to see the complete customer lifecycle. There’s no big picture.

Amplitude provides all of the information you need in one place. There are real users behind the numbers you see in an experiment. And Amplitude is able to provide information on specific leads and how they behave in an experiment.

View user information and activity stream to get a deeper understanding of what might be driving behavior.

In the example above, the user doesn’t seem to be engaged with the site they’re visiting: they were last seen “a month ago” and visited for only one session.

This set of information could provide further insight as to why a user dropped off in an experiment. It may not be the size of a button that matters to them at all. It may be that they don’t represent your target user, so they’re looking for a different solution from what you provide.

You’ll also be able to see the lead status of users that take part in the experiment, as you would in your CRM. See if there has been follow-through after the experiment and whether the lead has produced revenue for your company. Amplitude surfaces this because there’s more to A/B tests than measuring statistical significance.

All of this broadens the context of your experiments and allows for a greater understanding of what’s going on with your customers from a CRO perspective. You can see the customer journey from start to finish and where the results of your A/B test fit in as a vital part of that journey.

Run Better A/B Tests

Using Amplitude, you have the opportunity to make every A/B test count. Learn how your funnel is performing in intricate detail and get a high-level view of your process to optimize your conversion rate.

What are your best next steps? Download our VICE A/B testing framework template above to help you make smarter choices when it comes to which hypotheses to test first, then implement Amplitude for your marketing optimization.

Also, if you found this post useful, get in touch with McGaw for a free consultation. We’ll help you create an effective MarTech stack that incorporates Amplitude so you can start implementing more valuable A/B tests ASAP.

Andrew Seipp

Director of Growth Marketing, McGaw.io

Andrew Seipp is the Director of Growth Marketing at McGaw.io. He creates and implements strategies that are fully automated and scalable using tools like HubSpot, Marketo, or Autopilot. Andrew has years of experience driving success for clients, and Amplitude is one of his favorite tools of the marketing trade for pushing the growth needle.