Why VoC Is Not Enough

Companies seeking to stay competitive in an increasingly digital-first world need a more comprehensive view of their customers’ experiences.

The idea of listening to customers is as old as business itself.

Over time that practice, formalized as “Voice of Customer” (VoC), has come to include interviews, surveys, and focus groups. And, in more recent years, there has been an effort to bring those techniques online with post-purchase intercept questionnaires, feedback forms, and follow-up emails.

These approaches typically gauge the effort customers had to exert to do business with a company, the likelihood of their recommending its products or services, and their overall satisfaction with their experience. (The acronyms for popular VoC metrics—CES, NPS and CSAT, respectively—may be more familiar.)

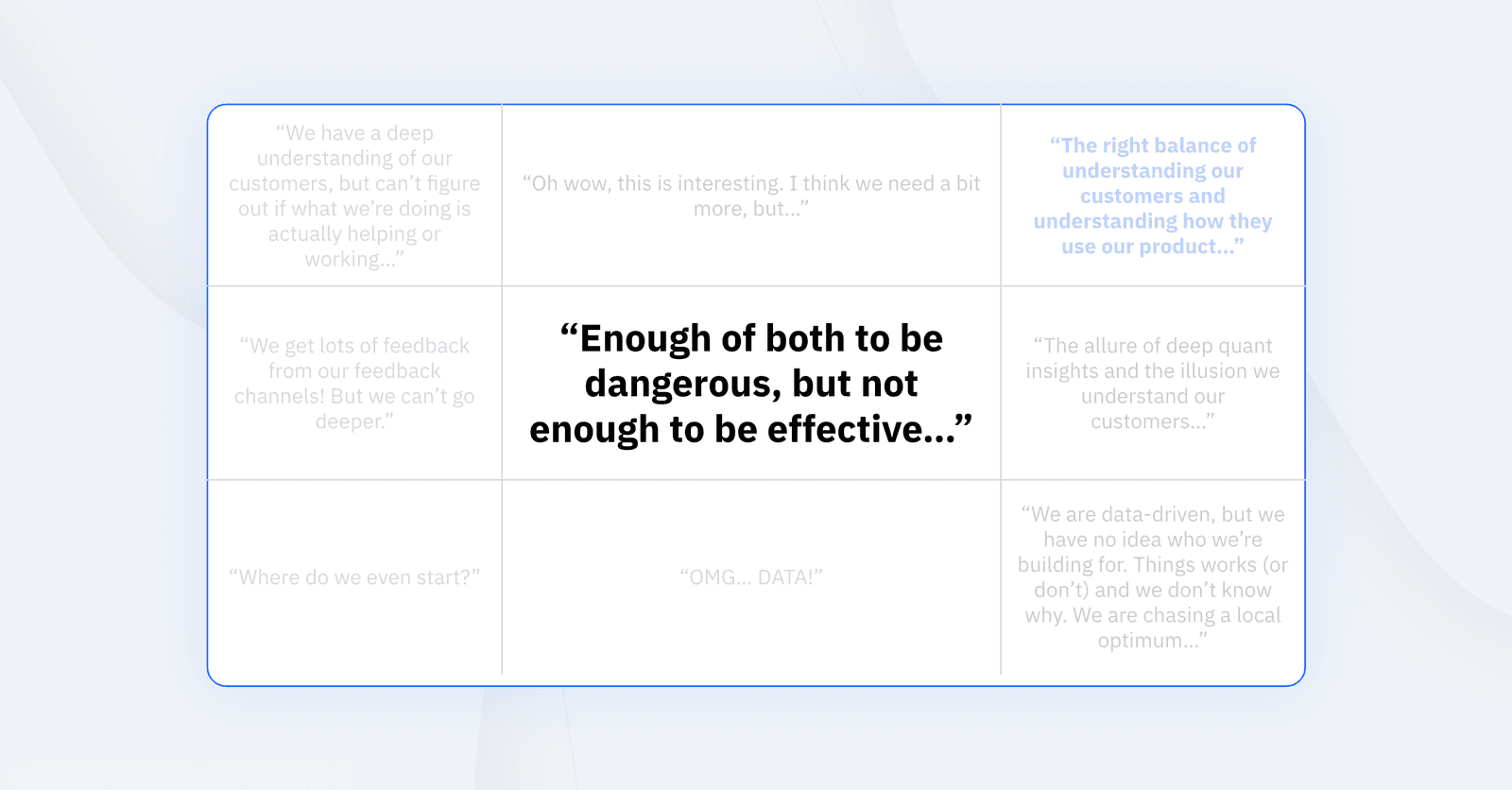

When it comes to understanding and improving customer experience, there is no question that feedback is important. But is VoC data enough in today’s increasingly competitive digital environment? Our work with some of the world’s top digital teams suggests the answer is no.

That conclusion is mirrored by a recent Harvard Business Review Analytic Services survey of nearly 300 executives that found only 16 percent of respondents consider net promoter score (NPS) a reliable indicator of long-term success, reflecting a major shift away from using customer sentiment to measure experience.

An Incomplete Snapshot

When the term VoC was coined in 1993, methods like surveys and focus groups were among the only ways businesses could hope to understand their relationships with customers. A lot has changed since then. There are more and more digital-first businesses. And even among companies that traffic in non-digital products and services, there are fewer distinctions between digital and analogue, brick and mortar, online and offline.

Of course, VoC has continued to proliferate with new technologies that connect with customers online. Yet the feedback gathered will often say little about how customers are actually using a product or service and what might cause them to return—or not. That’s a major problem. As author and co-founder of Mule Design Erika Hall observed in a Medium post, customer loyalty is, after all, habit. “And to be more successful creating loyalty,” she wrote, “you need to measure the things that build habit.”

By and large, VoC doesn’t do that. While it’s deployed across a broad range of channels, it often takes small, point-in-time samples, without the context that would make them meaningful. The result misses the complex behaviors that tie the customer experience together.

To use a decidedly non-digital example, take that restaurant staple, the comment card, which asks patrons to rate their dining experience. How different would those answers look in light of such information as whether it was the diners’ first or 10th visit, what they ordered, and how busy the restaurant was? Or if the card were used more strategically and distributed only to first-time diners? Even when VoC efforts do capture valuable feedback, that feedback is scored and aggregated in a way that makes it challenging to translate into action.

Perhaps more troubling still is the way some VoC methods rely on numerical ratings, often asking customers to assign satisfaction scores or predict future behavior on a 0-10 scale, which places those methods in a pseudoscientific gray area. Jared Spool, author and principal of the firm User Interface Engineering, summed up the problem in an edition of his UX Strategy Newsletter.

“We can’t reduce user experience to a single number,” he wrote. Rather, he continued: “Customer experience is the sum total of all the interactions our customers have with our products, sites, employees, and the brand. Every sequence of interactions will differ for every customer.” In her Medium post, Hall agreed, writing, “Surveys are the most dangerous research tool—misunderstood and misused. They frequently straddle the qualitative and quantitative, and at their worst represent the worst of both.”
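To make the single-number problem concrete, here is a minimal Python sketch. It assumes the commonly used NPS convention (scores of 9-10 count as promoters, 0-6 as detractors, and the score is the percentage of promoters minus the percentage of detractors), which this article does not spell out. Both response lists are invented for illustration.

```python
def nps(scores):
    """Net promoter score under the common convention (an assumption here):
    percent of promoters (9-10) minus percent of detractors (0-6)."""
    if not scores:
        raise ValueError("scores must not be empty")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


# Hypothetical survey responses on a 0-10 scale.
polarized = [10, 10, 10, 10, 10, 10, 0, 0, 0, 0]  # 6 promoters, 4 detractors
lukewarm = [9, 9, 8, 8, 8, 8, 8, 8, 7, 7]         # 2 promoters, 0 detractors

print(nps(polarized), nps(lukewarm))
```

Under these assumptions both groups produce the same score, even though one contains four deeply unhappy customers and the other contains none. The aggregate hides exactly the differences a product team would want to act on.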

The sort of generalized data VoC provides won’t go very far toward improving customer experiences, especially when it lands on the desks of cross-functional product teams filtered, summarized, and stripped of any behavioral context. Quickly converting that data into product changes is only half of the problem. There is also the matter of assessing the impact of those changes.

As Richard Banfield, VP of Design Transformation at InVision, said of VoC: “Used on its own, it’s a reasonable tool for getting customers’ descriptions of their pains. What the VoC won’t do is tell you what the solution might be. For that, you need to run experiments to test if there’s a good fit between the problem and solution. Experiments provide you with the data you need to shape the solution. Ideally this would happen on a daily basis, as part of regular design and development work.” That’s why he sees VoC as a “single tool in a UX utility belt full of great tools.”

What Else Do Great Teams Do?

Based on our observations, in addition to VoC, the most successful teams do the following:

- Capture granular behavioral data that describes the complex relationship spanning the customer journey

- Embrace both deep qualitative and quantitative research and analysis methods

- Task cross-functional teams including designers, developers, and product managers with learning about customers and their needs, aligning their goals around customer loyalty

- Understand how individual and connected behaviors directly impact key metrics around engagement, retention, and customer value, and use that information to determine the right product metrics to track and influence

- Use that data to personalize and customize the product experience to suit the specific needs of specific groups of users

- Learn as fast as they ship, and ship as fast as they learn

- Close the feedback loop by implementing changes immediately and measuring their impact within hours
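As a rough illustration of linking a behavior to a key metric such as retention, the sketch below splits users by whether they ever performed a given action and compares how many of each group came back later. The event log, the event names (`join_group`, `workout`), and the `retention_by_behavior` helper are all hypothetical, not part of any particular analytics product.

```python
# Hypothetical event log: (user_id, event_name, week_number).
events = [
    ("u1", "join_group", 0), ("u1", "workout", 0), ("u1", "workout", 4),
    ("u2", "workout", 0),
    ("u3", "join_group", 0), ("u3", "workout", 0), ("u3", "workout", 4),
    ("u4", "workout", 0), ("u4", "workout", 4),
    ("u5", "workout", 0),
]


def retention_by_behavior(events, behavior, return_week):
    """Split users by whether they ever performed `behavior`, then report the
    share of each group with any event in `return_week` or later."""
    users, did_behavior, returned = set(), set(), set()
    for user, name, week in events:
        users.add(user)
        if name == behavior:
            did_behavior.add(user)
        if week >= return_week:
            returned.add(user)

    def rate(group):
        # None signals an empty group rather than a 0% retention rate.
        return len(group & returned) / len(group) if group else None

    return {"did": rate(did_behavior), "did_not": rate(users - did_behavior)}


result = retention_by_behavior(events, "join_group", return_week=4)
print(result)
```

A gap between the two groups is only a correlation. It suggests where to look, and an experiment is still needed to show that encouraging the behavior actually changes retention.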

Amplitude customer Peloton, for example, has found tremendous success by paying close attention to its customers’ behaviors, including those that take place outside the product. When the company’s multichannel VoC efforts found that online member groups were organizing their own training clubs, the company saw an opportunity to build on that organic movement with new, community-minded product offerings. By running a series of experiments and carefully monitoring impact, the company eventually found a winner: the addition of a hashtag feature. That one simple innovation proved remarkably effective. Today, more than 50 percent of Peloton members use tags, well above the 20-percent benchmark the company had set.

“It’s all very, very exciting and it kind of brings a level of personality and community to the platform that we didn’t have,” David Packles, Director of Product at Peloton Interactive, shared at Amplitude’s 2020 Amplify conference. “These organic behaviors are so, so powerful. And there’s so, so much we can learn from them.”

By blending those sorts of VoC efforts with advanced product analytics, businesses have a real opportunity to create value for the customer and themselves. The merits of various VoC metrics and approaches may be debated, but what’s worrisome is when organizations rely solely on VoC while keeping their developers and designers at arm’s length from their customers.

John Cutler

Former Product Evangelist, Amplitude

John Cutler is a former product evangelist and coach at Amplitude.
