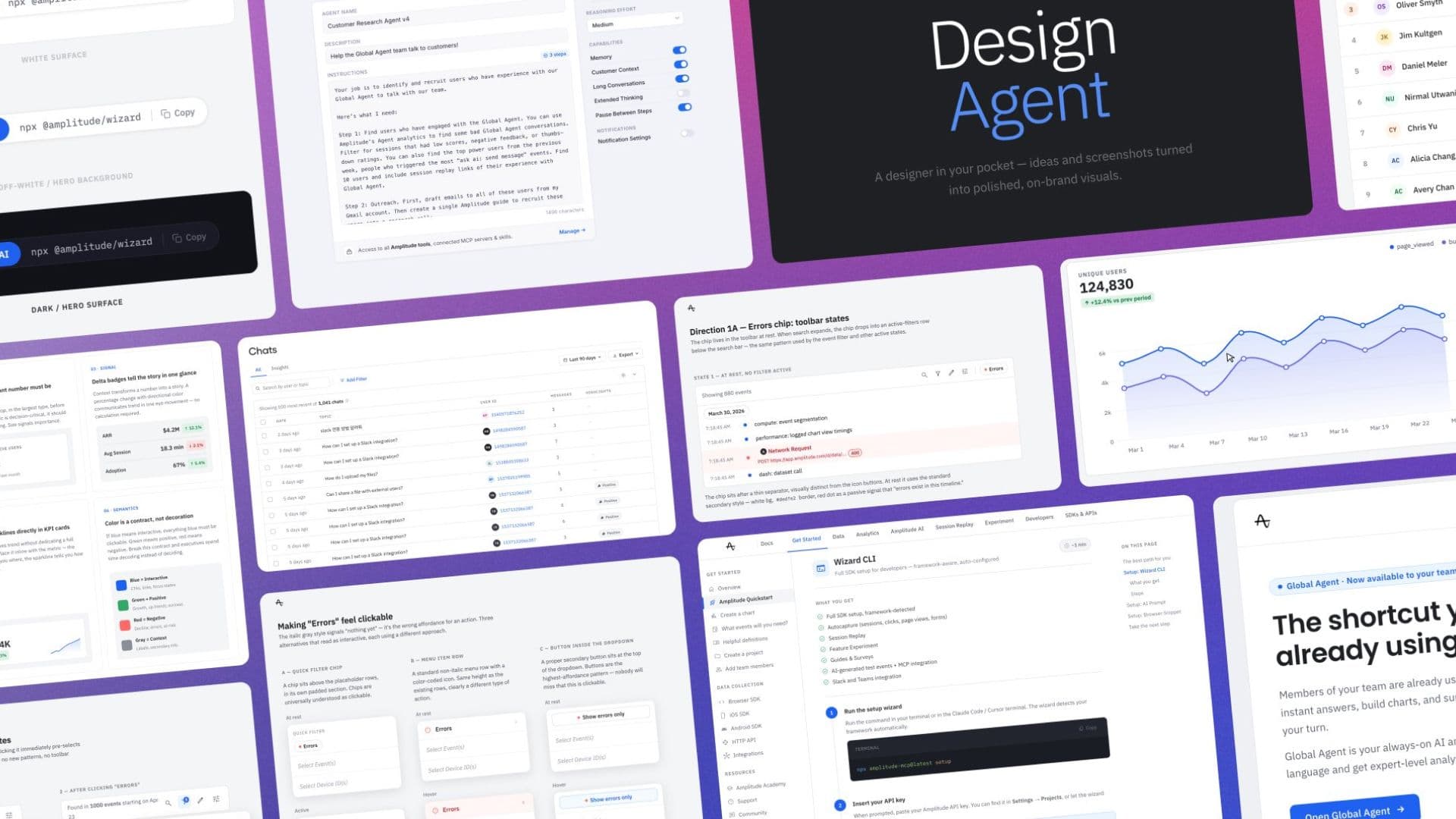

How We Built a Design Agent at Amplitude with Claude Managed Agents and Cloudflare

How we built Design Agent, what infrastructure choices make it fast, and what we’re learning now that people are actually using it.

May 19, 2026

11 min read

Building the Validation Stack for AI Product Development

May 14, 2026

7 min read

Amplitude and Statsig Partnership

May 5, 2026

2 min read

AI Week 2026: Upleveling All Together

Apr 27, 2026

11 min read

Amplitude AI Builders: Paul Hultgren Chats about AI Assistant

Apr 24, 2026

10 min read

Creating the Intelligence Layer: Welcoming CPO Gab Menachem

Apr 14, 2026

6 min read

Amplitude at SXSW: Our AI Cookout for Startups

Apr 3, 2026

5 min read

Amplitude Named to Fast Company’s Most Innovative Companies of 2026

Mar 24, 2026

3 min read

Founders’ Awards: Celebrate the Winners of 2025

Mar 6, 2026

12 min read

Amplitude’s All-Star Weekend with the NBA Foundation: A Recap

Feb 26, 2026

5 min read

Why Hackathons Are the Best Kept Secret to Drive GTM Innovation

Feb 4, 2026

6 min read

Meet the Ampliteers: Values Awards Winners, Q3 2025

Feb 4, 2026

10 min read

Our Quest to Become AI-First and What We Learned

Jan 28, 2026

5 min read