Meet Amplitude AI Assistant: The Support Agent Your Product Deserves

An in-product support agent that knows your users, acts on their behalf, and measures whether it actually helped

Chatbots were built to eliminate support queues. For straightforward questions, those chatbots mostly did their job. But harder problems—the ones that actually drive churn—stayed hard.

Here’s an example that happens all too often: a user gets stuck a few days into onboarding and asks a chatbot for help. The chatbot searches a knowledge base and returns a help article, but it’s one the user has already read. So the user describes the problem differently. Same article. They try a third time. Same result. Eventually, they file a ticket and wait.

These chatbots have no idea what the user was doing when they got stuck, what they tried before asking, or whether anything the chatbot suggested actually worked afterward. They can look up answers, but they lack critical context about the product and the user.

This blind spot matters for the problems that matter most. The user who can’t figure out how to set up their account isn’t going to churn because your chatbot was slow. They’re going to churn because nobody noticed they were stuck in the first place.

That’s why we’re excited to release Amplitude AI Assistant.

How product data improves support agents

AI Assistant is an in-product support agent, built on top of our behavioral context layer. That means it knows what a user did before they asked a question, where they are in the product right now, and whether they completed their goal after getting help. In addition to your knowledge base, the support agent is now wired into the same data your product team uses to make decisions.

The practical difference shows up immediately. When a user asks, “How do I set up my account?” a different chatbot might return standard documentation. AI Assistant knows what page the user is on (the account page), their in-product history (no account created), and their behavioral context (failed to find the setup sidebar twice in the last five minutes). With this information, AI Assistant’s response is no longer generic. It’s personalized to the user’s specific circumstances.

From chatbots to co-browsing agents inside your product

Most support tools, even good ones, stop after providing an answer. They tell users what to do and trust them to execute. That’s enough when the task is simple. But when a user is dealing with a multi-step workflow or a feature they've never touched, they need more than just an answer. This is where AI Assistant shines. When a user needs step-by-step assistance, it can trigger an in-product guide directly from the conversation.

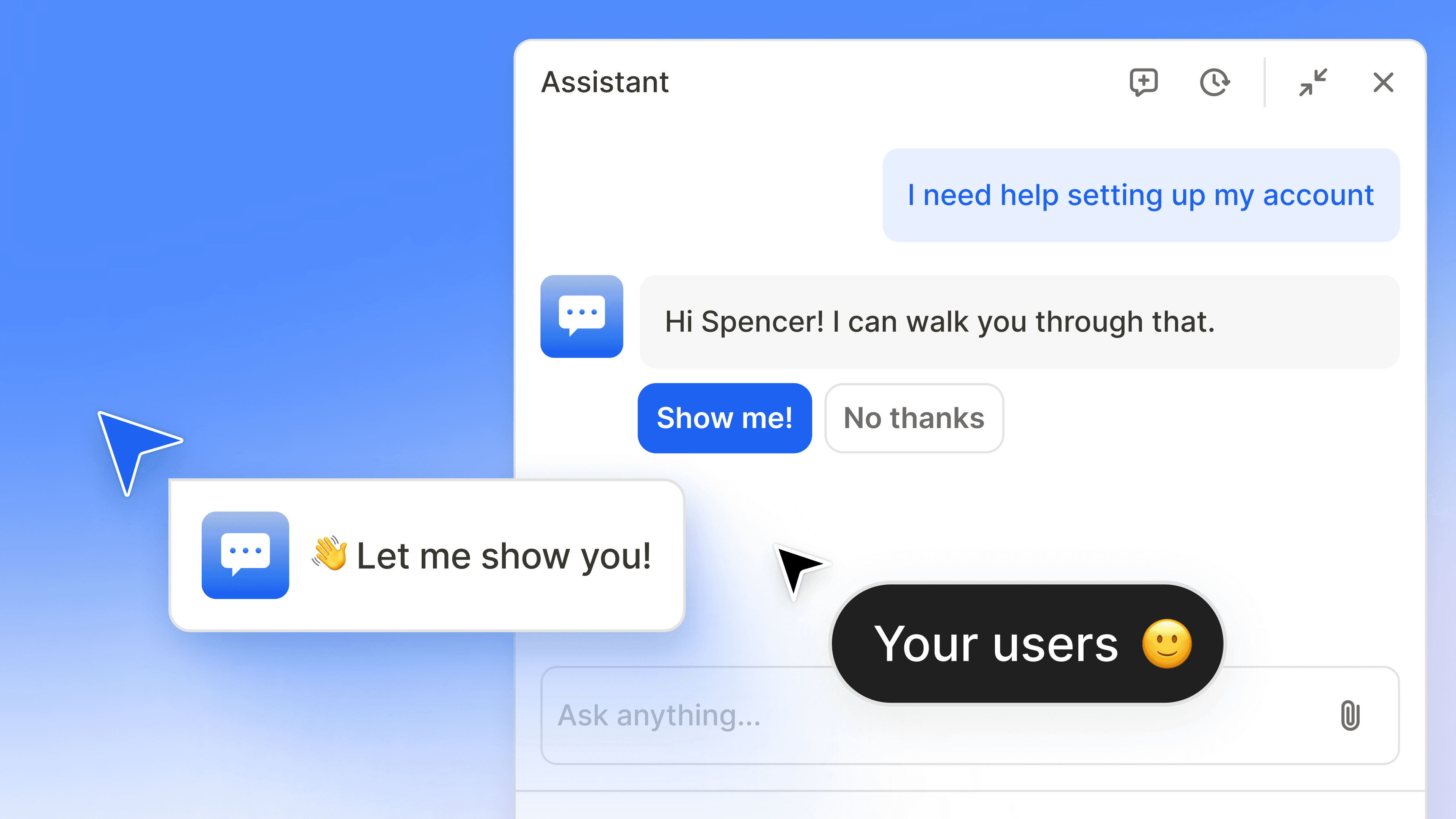

Consider the user asking about account setup. AI Assistant will explain the concept briefly, then ask: “Want me to walk you through it?” It can launch a guide that moves around the screen, highlighting each UI element in sequence. No new tab. No interpreting documentation. The user sees in-product instructions that help them complete their task.

And it goes further. For certain workflows, such as adding new users, AI Assistant can complete tasks autonomously on a user's behalf. The user goes from stuck to done without switching contexts or waiting on anyone.

Because AI Assistant connects to Amplitude's Guides and Surveys infrastructure, it has an execution layer inside the product. That capability means the AI can decide what to show, when to intervene, and how to get a specific user through a specific task. We’re building the foundation for an agentic co-browsing experience inside your product: AI Assistant understands your UI, understands where the user is in it, and can orchestrate what happens next.

Support personalization based on real user behavior

Most personalization in support is rule-based. New users get one script, enterprise users get another. These rules are coarse and handwritten, based on broad assumptions about who the user is.

AI Assistant personalizes support based on what users have actually done. A trial user who has explored three features in their first session gets different guidance than a trial user who signed up and never came back. A power user hitting an edge case in an advanced workflow gets a response that acknowledges their experience rather than starting from the basics. This happens automatically because the behavioral data is already there. No one has to imagine the scenarios and create custom rules.

The distinction matters because behavior is a better predictor of what someone needs than a persona tag. Someone labeled “enterprise admin” in a CRM might be a brand new hire figuring out the product for the first time. Someone tagged as a “new user” might not need the basics explained. The behavioral signal catches these nuances. Static attributes miss them.

For product teams, this also means fewer manual rules to maintain. As your product evolves and user patterns shift, the personalization adapts because it's grounded in what users are actually doing, not in segments someone defined six months ago.

“Our users don’t want to stop what they’re doing to look for help or file a support ticket. Amplitude’s AI Assistant gives us a way to meet users where they are, inside the product, with guidance that feels personal and immediate. We see this as the future of how we can better support our customers.”

Michelle Esquivel

Senior Manager of Global Documentation, Advanced Solutions International, Inc.

Knowing what happened before and after with Session Replay

Traditional support metrics are often shallow. They can tell you if the user clicked the thumbs-up icon or closed the chat window. These signals are useful, but they only tell you whether the interaction felt okay in the moment. They don’t tell you if the user's problem was actually resolved.

AI Assistant connects to Session Replay, which changes how product support teams track resolution. When a user starts a conversation, the system has behavioral context: what they clicked, where they hesitated, what error they encountered, etc. When the conversation ends, it can watch the session to see what the user does next. Do they complete the workflow? Do they hit the same wall again? Do they leave the product?

This makes it possible to measure true resolution, not just chat resolution. The question shifts from “Did the user feel satisfied?” to “Did the user accomplish what they were trying to do?” That’s a meaningful difference for any team trying to understand whether their support experience is actually working.
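As a rough illustration of the difference between the two metrics, the sketch below compares a thumbs-up signal with what the user did after the chat ended. It is a hypothetical example, not Amplitude's API: the `Conversation` type, the event names, and the helper functions are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Conversation:
    """Hypothetical record of one support chat (not an Amplitude type)."""
    user_id: str
    goal_event: str   # event that marks the user's task as done
    thumbs_up: bool   # traditional "chat resolution" signal
    events_after: list[str] = field(default_factory=list)  # events seen after the chat


def chat_resolved(conv: Conversation) -> bool:
    """Traditional metric: did the interaction feel okay?"""
    return conv.thumbs_up


def truly_resolved(conv: Conversation) -> bool:
    """Behavioral metric: did the user reach the goal after the chat?"""
    return conv.goal_event in conv.events_after


def resolution_rates(convs: list[Conversation]) -> tuple[float, float]:
    """Return (chat resolution rate, true resolution rate) for a batch."""
    if not convs:
        return 0.0, 0.0
    n = len(convs)
    chat_rate = sum(chat_resolved(c) for c in convs) / n
    actual_rate = sum(truly_resolved(c) for c in convs) / n
    return chat_rate, actual_rate


# Made-up example data.
conversations = [
    Conversation("u1", "account_created", True, ["open_sidebar", "account_created"]),
    Conversation("u2", "account_created", True, ["open_sidebar", "error_shown"]),
    Conversation("u3", "account_created", False, ["account_created"]),
    Conversation("u4", "account_created", True, []),
]
chat_rate, true_rate = resolution_rates(conversations)
print(f"chat resolution: {chat_rate:.0%}, true resolution: {true_rate:.0%}")
```

In this made-up batch, three of four users gave a thumbs-up but only two completed account setup, which is the gap the behavioral metric is meant to expose.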

When a conversation does need to escalate to a human support agent, the handoff includes the full picture: conversation transcript, a summary of what the user was doing before the chat, and the session replay context. The human support agent doesn’t start blind. Escalation becomes a more natural transition.

Conversations that feed back into the product data

Support conversations are full of qualitative signals that product teams rarely act on. Users describe what confuses them in their own words. They articulate friction that doesn't show up cleanly in event data. They reveal the gap between what the product intends and what users experience.

In most support setups, these signals sit in a CX inbox. Someone might parse through them to make them useful. More often, they get summarized in a quarterly report and forgotten by the next sprint.

AI Assistant conversations flow into Amplitude’s AI Feedback system, where unstructured feedback is turned into structured insights alongside your analytics and session replay data. Product teams can see patterns: which features generate the most questions, which onboarding steps cause repeated confusion, and which error states users describe but your instrumentation doesn't capture.

Chat transcripts become part of the same product intelligence system your team already uses to decide what to build, what to fix, and where to invest. A spike in questions about a particular workflow is a signal. Paired with behavioral data showing where users drop off in that workflow, it becomes a clear action item.
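One way to picture that pairing is a simple ranking that combines question volume with drop-off. The sketch below is illustrative only: the workflow names, the numbers, and the scoring rule (question count multiplied by drop-off rate) are assumptions, not a description of how Amplitude computes anything.

```python
def rank_action_items(question_counts: dict[str, int],
                      drop_off_rates: dict[str, float],
                      top_n: int = 3) -> list[tuple[str, float]]:
    """Rank workflows by question volume weighted by drop-off rate.

    Only workflows present in both inputs are scored, since the point is
    to pair the qualitative signal with the behavioral one.
    """
    scored = [
        (workflow, question_counts[workflow] * drop_off_rates[workflow])
        for workflow in question_counts.keys() & drop_off_rates.keys()
    ]
    # Highest score first; break ties by name so the order is stable.
    scored.sort(key=lambda item: (-item[1], item[0]))
    return scored[:top_n]


# Made-up example data.
questions = {"account_setup": 120, "add_users": 40, "export_report": 15}
drop_off = {"account_setup": 0.5, "add_users": 0.25, "billing": 0.1}

for workflow, score in rank_action_items(questions, drop_off):
    print(workflow, score)
```

Workflows that appear in only one of the two inputs are left out here; a real analysis might treat a question spike with no matching behavioral data as a sign of missing instrumentation.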

Closing the feedback loop

There is an important pattern that runs through everything AI Assistant does: collect context, understand the situation, take action, and then measure whether the action worked. This is the same loop product teams already run with Amplitude for feature development and experimentation.

Combined with the rest of the Amplitude platform, AI Assistant helps teams answer questions such as: Which topics does the assistant handle well? Where does it fall short? Are users who interact with the assistant more likely to complete onboarding, adopt a new feature, or stay active? And because this data lives inside Amplitude, teams can act on what they find. Update a guide. Run an experiment. Fix the underlying product issue that keeps generating questions.

Over time, this compounds. The assistant handles support. The conversations reveal where users struggle. The team fixes those issues. The assistant fields fewer questions about those topics, which frees it up to handle new ones. The product gets better because support is no longer a dead end for user feedback.

That loop, where a support interaction at 2 p.m. leads to a product fix by end of day, is the kind of thing that was theoretically possible before but required stitching together different tools and a lot of manual triage. With AI Assistant, it's built into the platform.

See AI Assistant for yourself

AI Assistant is now available to customers.

To see AI Assistant in action, register for our upcoming launch event, Introducing Amplitude AI Assistant, where we'll walk through the answer-to-action workflow, showcase the session replay integration, and discuss how organizations are turning conversations into product improvements.

Spencer Whittaker

Senior AI Product Manager

Spencer Whittaker is a senior AI product manager at Amplitude. He focuses on using AI to advance Amplitude's mission of helping companies build better products.
